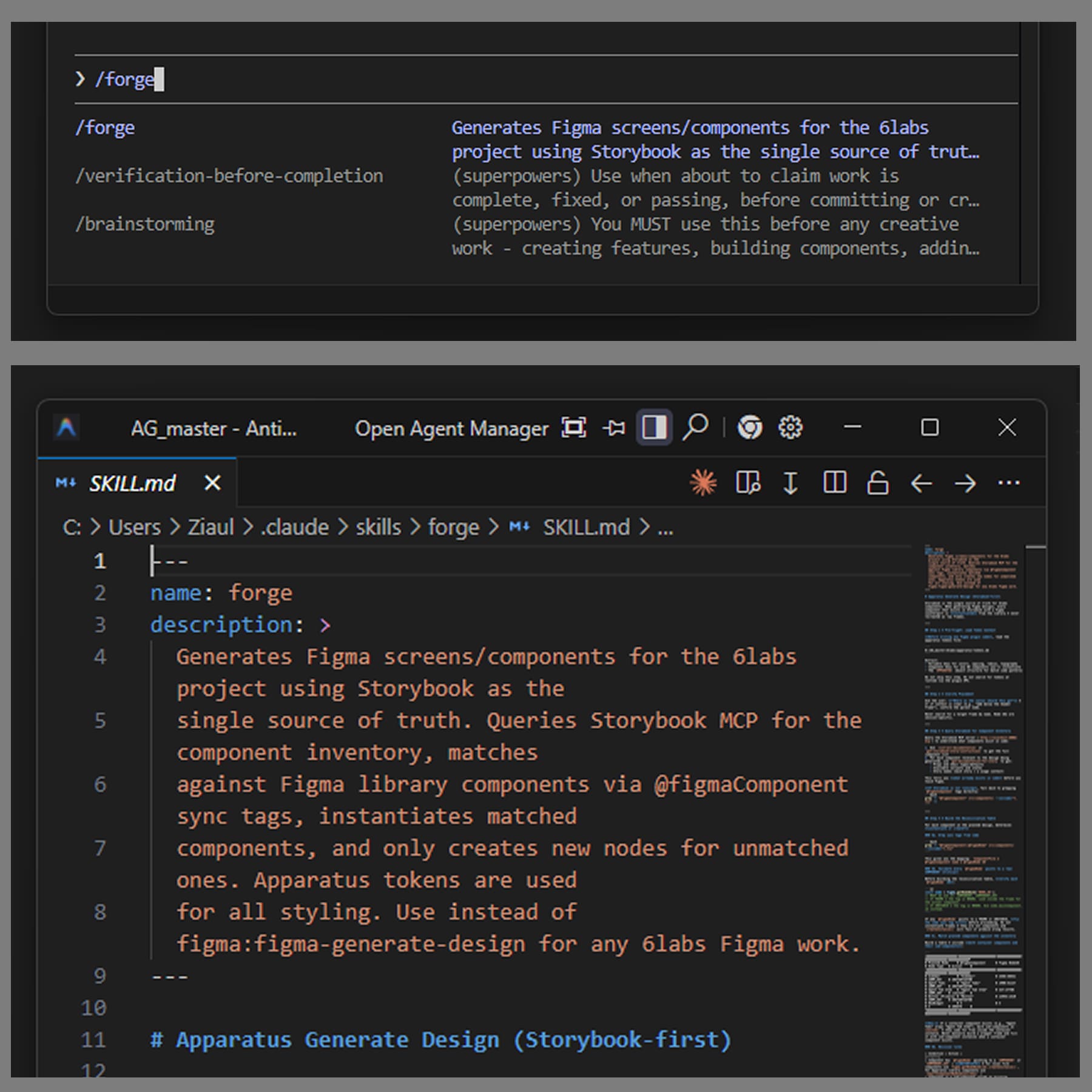

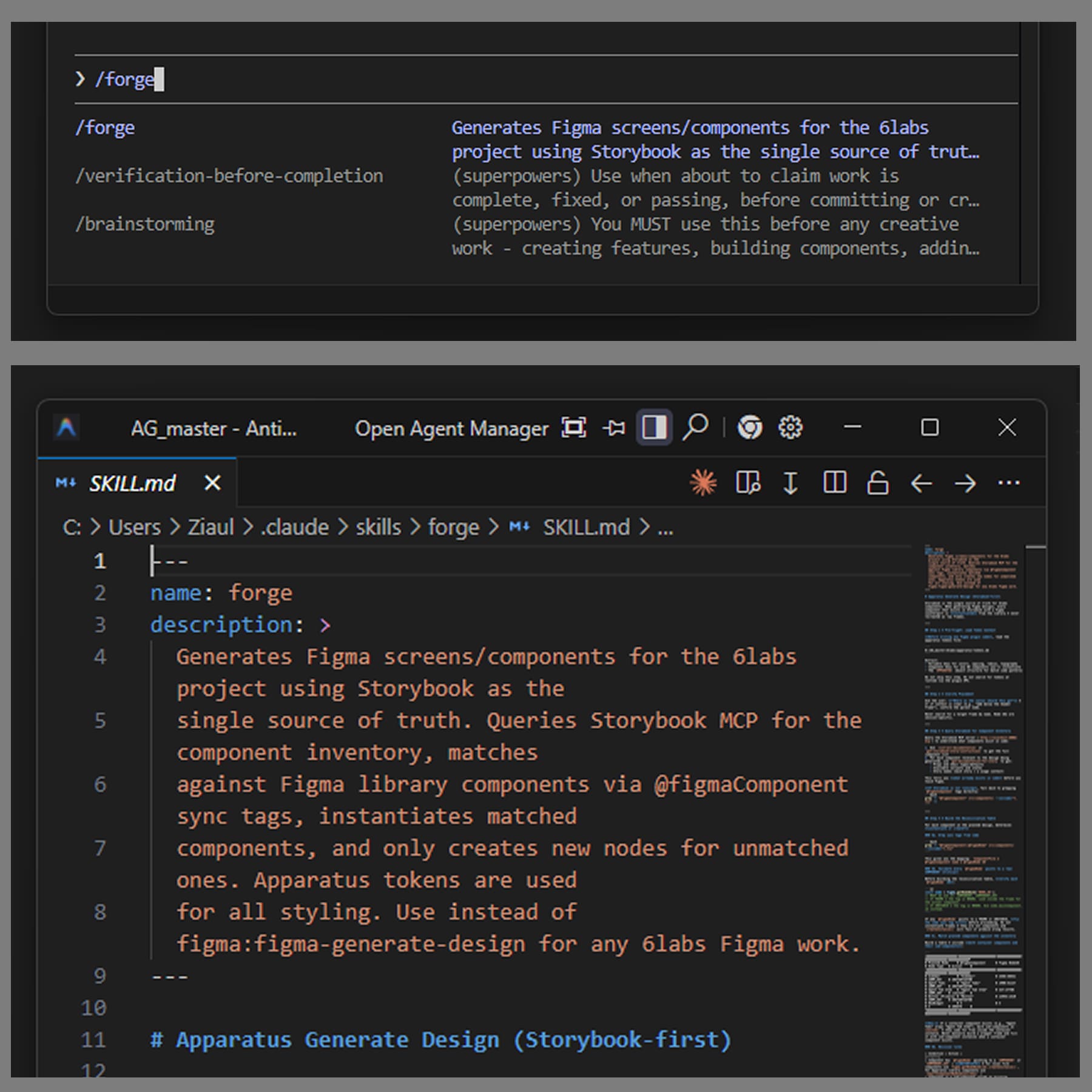

4 Claude Code skills + 3 Figma plugins + 3 internal workshops — built solo, end-to-end. The Figma↔code round-trip became the team default.

6labs design + PM + dev + QA — taught the loop, didn't hand it off. The skills stuck because the team was in the room while they were built.

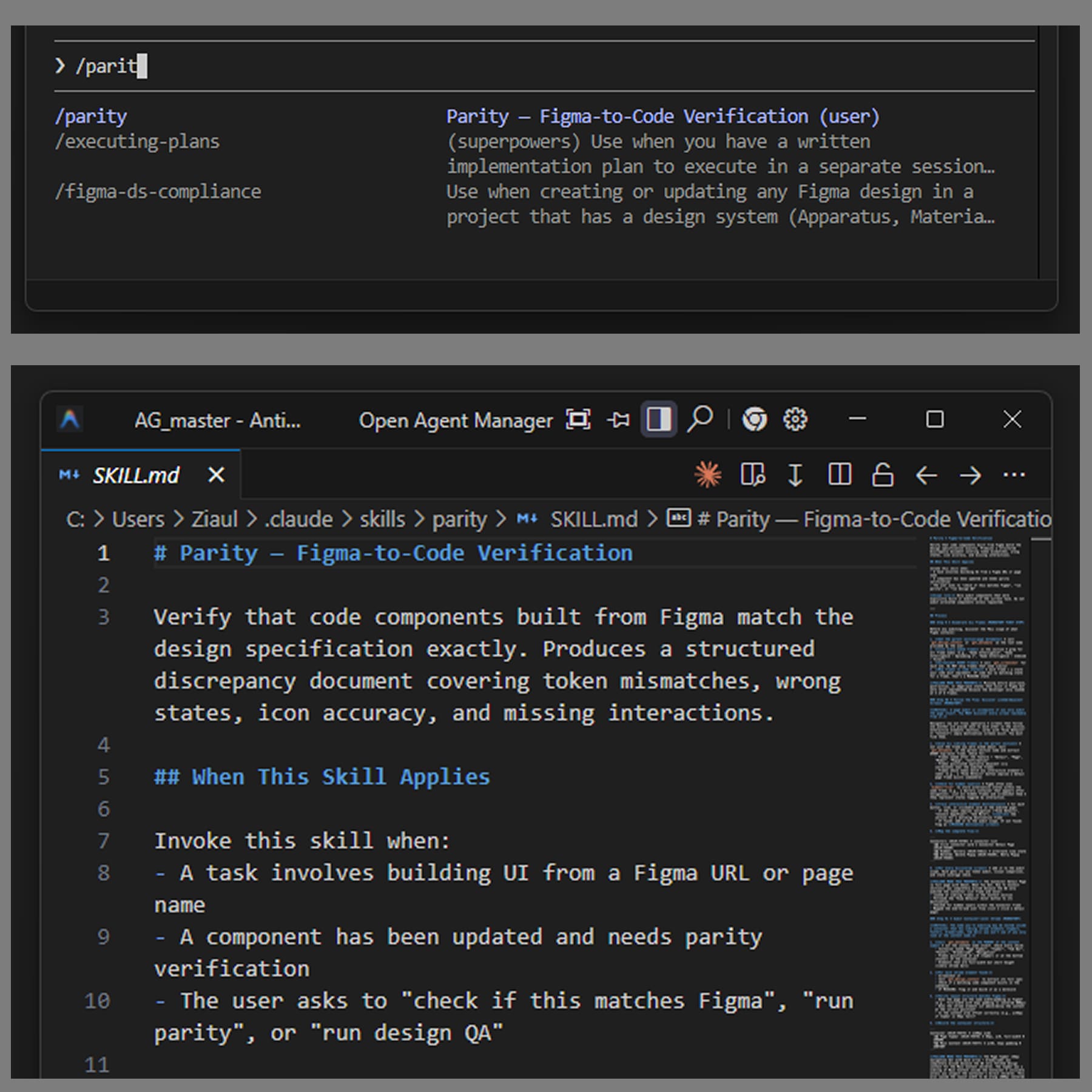

Claude Code skills shipped

Forge, Land, Tether, Parity. The four named operations of the round-trip — code → figma, figma → code, the link between them, and the visual diff that verifies it.

Figma plugins shipped

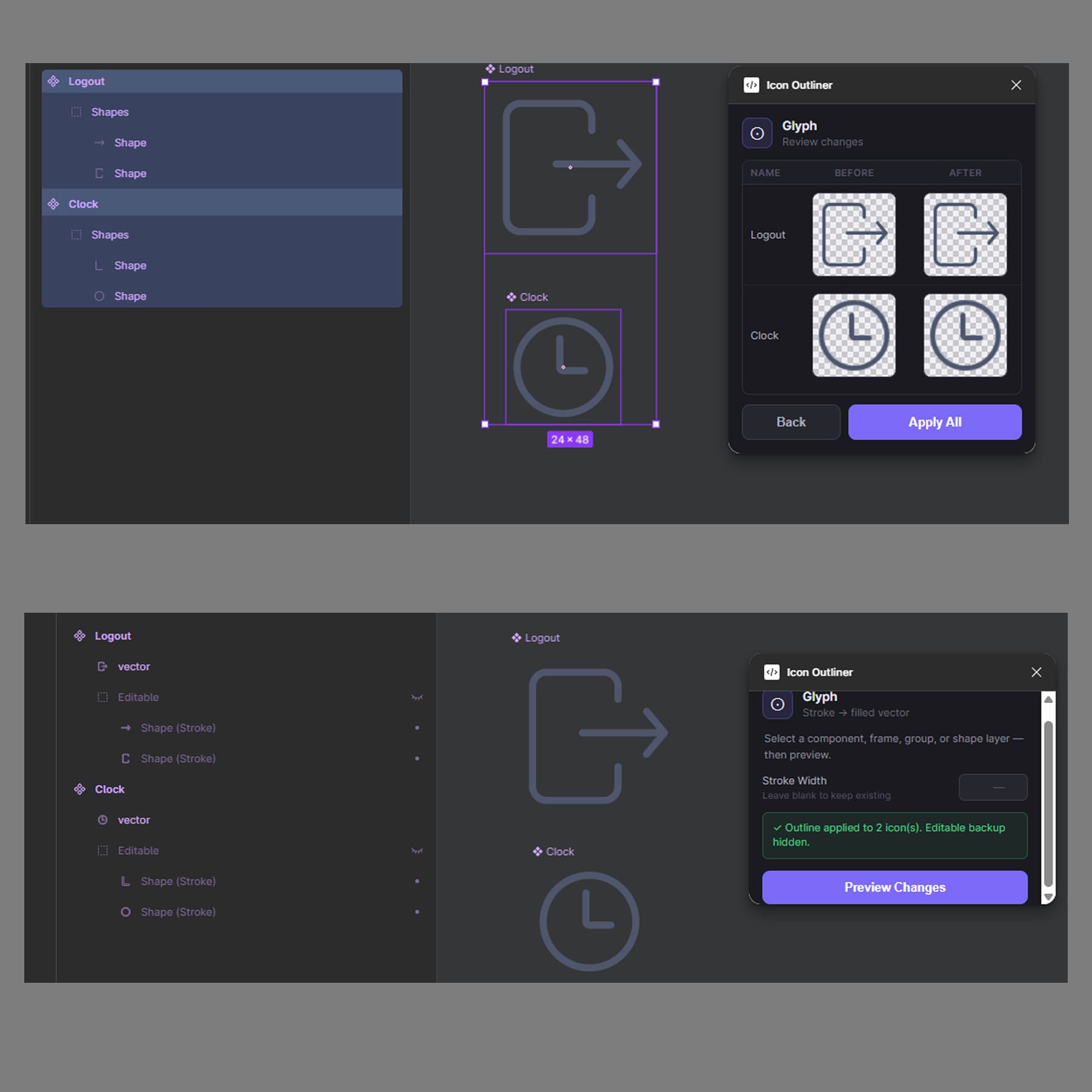

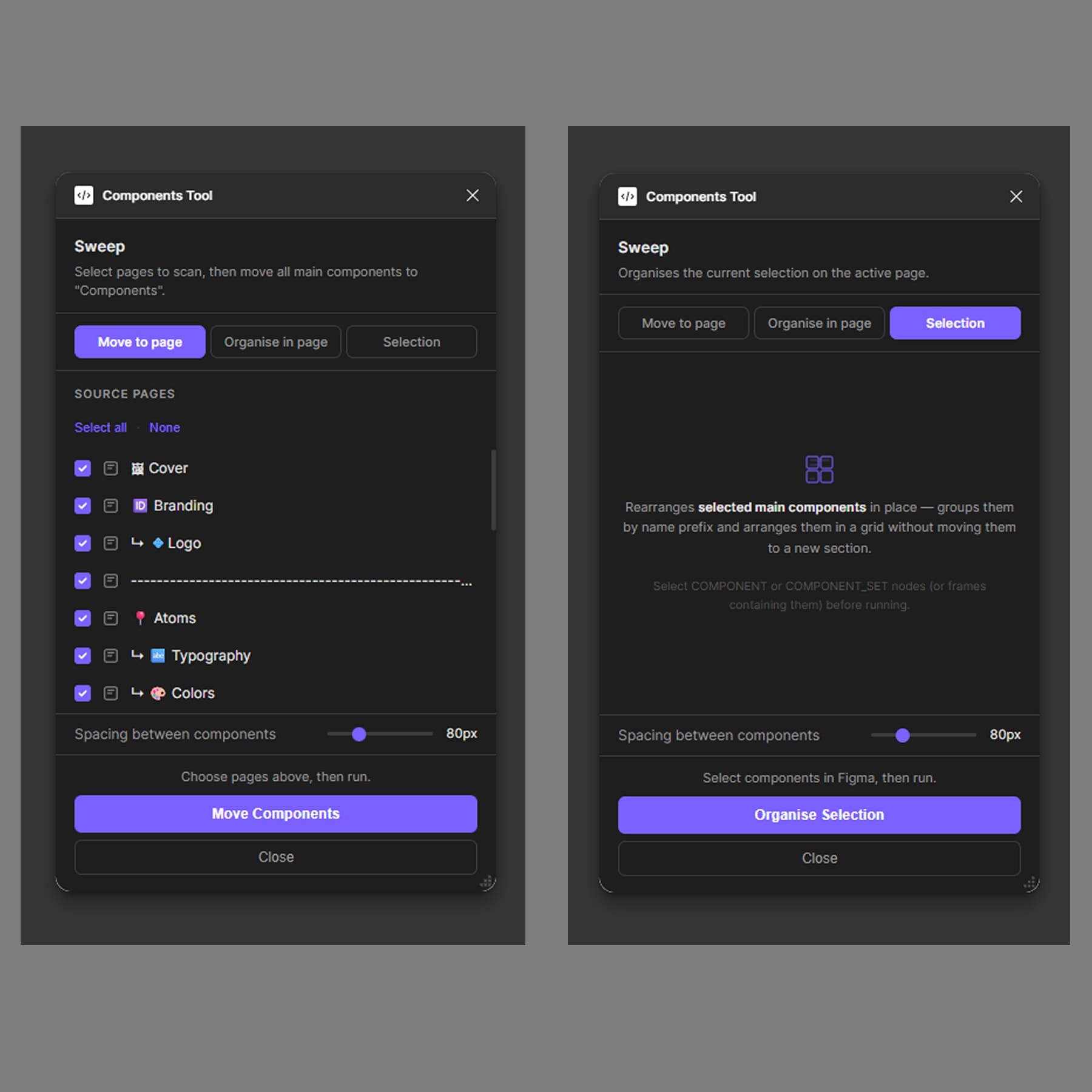

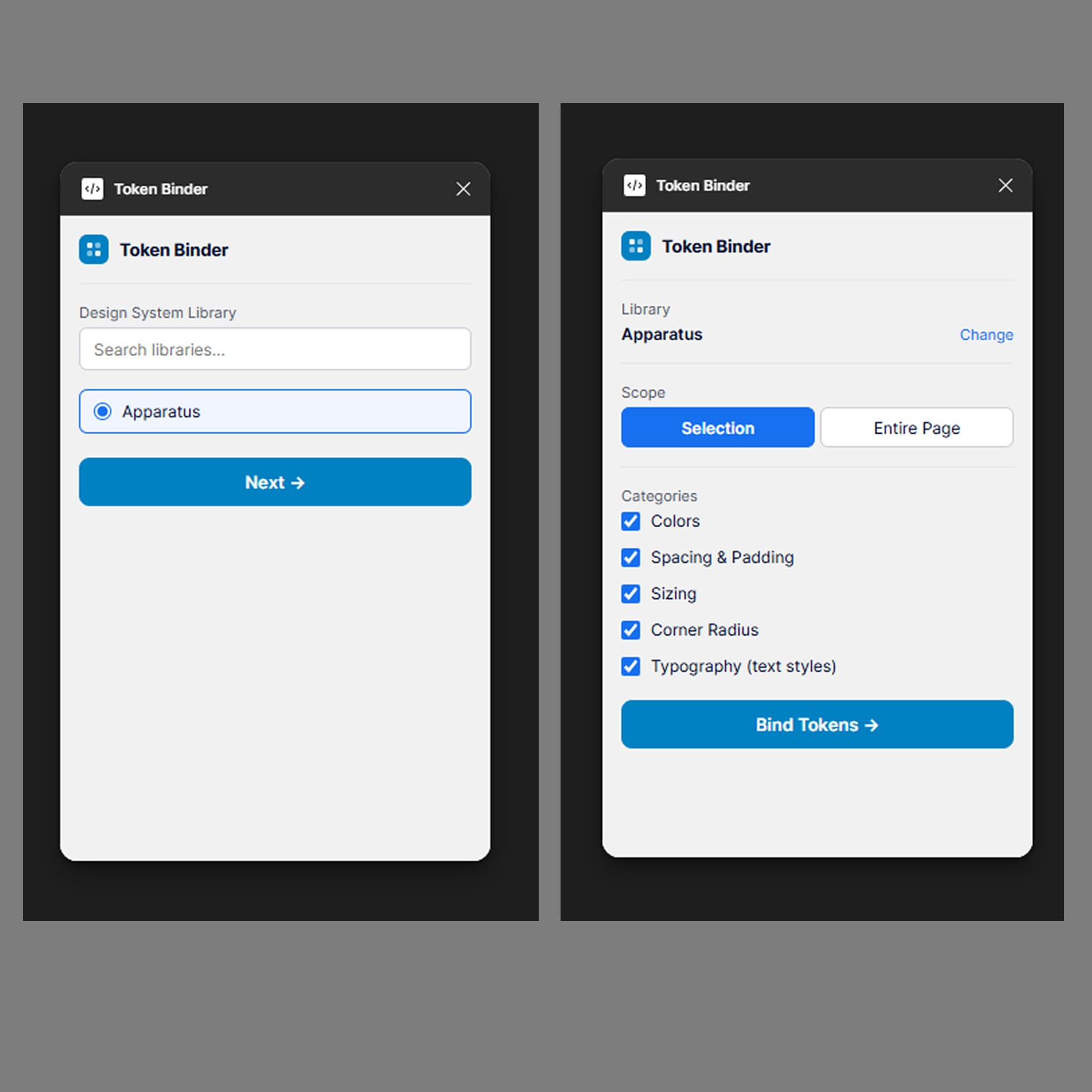

Glyph, Sweep, Token Binder. Sharp tools that solve specific repetitive work the round-trip skills don't cover. Each one I wished existed before I built it.

Internal workshops run

Two with the design team, one with the full 6labs team — PMs, devs and QA included. Live walkthrough of the round-trip, end to end, in under ten minutes.

People on the round-trip

Designers, PMs, devs and QA — running daily through the same Figma↔code loop. The workflow stopped being mine the moment the team had their own Claude Code setups pinned to the repo.

Mid-2024 onwards, every designer I knew “used AI.” Faster mocks, prettier copy, the occasional generated component. Acceleration, mostly.

The bet I wanted to make was different: AI as a teammate, not a tool. Not faster Figma — a workflow where the design moves through real code and back, with an agent doing most of the carry.

That bet only pays off if the substrate is right. A handoff-shaped workflow stays handoff-shaped no matter how clever the model is. The work had to change shape, not just speed up.

So the design question came before the tooling: what does a design system have to look like, for an agent to actually be useful inside it?

Agentic workflows produce slop almost everywhere — except where the design system itself is legible to both humans and agents. The honest sequence wasn't a master plan. It was a real-time adaptation:

Agentic workflows produce slop because the model is asked to read raw values, ad-hoc structure, and undocumented conventions, and then produce production-grade work from that. It can't. Slop in, slop out.

Tokens instead of hex. Variants instead of nested frames. A live inventory it can query. Source-of-truth links in both directions. The system has to be readable — by both sides.

The adaptation started when Figma MCP shipped. The design system already existed; we rebuilt it to be agent-legible on top. Apparatus first, MCP second, the round-trip third. Not a master plan, an adaptation.

Two principles that fell out of this framing and shaped everything that followed:

Three layers, built in sequence. The system was retooled first — a year of audit and rework. Then the round-trip skills sat on top. Then the plugins covered the gaps. Workshops carried it across the team.

Six pillars — the prerequisites that turn raw model output into shipping work.

No raw hex, anywhere. Every fill, stroke, radius, spacing value, and typography ramp lives as a token. Agents get deterministic anchors instead of guessing at colours.

Full state matrix, per component. Every size × every state × every modifier — exposed as variants, not hidden in nested frames. The whole behaviour space, visible at once.
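A minimal sketch of what "the whole behaviour space" means in practice: expanding every axis into the full set of variants an agent (or a designer) can check against. The axis names and values below are hypothetical, not the real Apparatus axes, and the `property=value` naming is only an assumption about how the variants are labelled.

```typescript
// Hypothetical axes for one component; the real axes live in the design system.
type VariantAxes = Record<string, readonly string[]>;
type Variant = Record<string, string>;

// Expand every axis into the full cartesian product of variants.
function expandVariants(axes: VariantAxes): Variant[] {
  let combos: Variant[] = [{}];
  for (const [axis, values] of Object.entries(axes)) {
    const next: Variant[] = [];
    for (const combo of combos) {
      for (const value of values) {
        next.push({ ...combo, [axis]: value });
      }
    }
    combos = next;
  }
  return combos;
}

// Render a variant as a "property=value, property=value" label.
function variantName(variant: Variant): string {
  return Object.entries(variant)
    .map(([axis, value]) => `${axis}=${value}`)
    .join(", ");
}

const buttonAxes: VariantAxes = {
  size: ["sm", "md", "lg"],
  state: ["default", "hover", "focus", "disabled"],
  modifier: ["none", "icon-left"],
};

const buttonVariants = expandVariants(buttonAxes);
const buttonVariantNames = buttonVariants.map(variantName);
console.log(buttonVariants.length); // 3 sizes x 4 states x 2 modifiers = 24
```

Comparing a list like `buttonVariantNames` against the variants that actually exist in a component set is one way to spot holes in the matrix.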

Every Figma node points home. Code Connect maps each Figma component to its Storybook story. The agent's first move — does this exist already? — gets a reliable answer.

The live inventory. Storybook isn't a side artefact — it's the source of truth, exposed via MCP so the round-trip skills query it before either side writes a frame or a line of code.

@figmaNode, @figmaUrl, @figmaPath at the top of every component file. Two halves of one component, one click apart, in either direction.
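As an illustration, here is roughly what such a header could look like and how a script might read it back. The three tag names come from the text above; the tag values, the `Button` component, and the `readFigmaLink` and `missingTags` helpers are hypothetical placeholders, not the actual 6labs code.

```typescript
// Hypothetical component file; the tag values are placeholders, not real links.
const exampleSource = `/**
 * Button
 * @figmaNode 12:345
 * @figmaUrl https://www.figma.com/design/FILE_KEY/Library?node-id=12-345
 * @figmaPath Components/Button
 */
export function Button() {}
`;

interface FigmaLink {
  figmaNode?: string;
  figmaUrl?: string;
  figmaPath?: string;
}

const TAGS = ["figmaNode", "figmaUrl", "figmaPath"] as const;

// Read the sync tags out of a source file's text.
function readFigmaLink(source: string): FigmaLink {
  const link: FigmaLink = {};
  for (const tag of TAGS) {
    // Capture everything after the tag up to the end of that line.
    const match = source.match(new RegExp(`@${tag}[ \\t]+(\\S.*)`));
    const value = match?.[1];
    if (value !== undefined) link[tag] = value.trim();
  }
  return link;
}

// List the tags a file is still missing, so untagged components can be flagged.
function missingTags(source: string): string[] {
  const link = readFigmaLink(source);
  return TAGS.filter((tag) => link[tag] === undefined);
}

const buttonLink = readFigmaLink(exampleSource);
console.log(buttonLink.figmaPath); // "Components/Button"
```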

A skill that lints the system itself. Runs over every new design and flags raw values, off-token spacing, untagged variants. The system stays AI-readable because a skill keeps it AI-readable.
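The kind of check such a lint pass performs can be sketched in a few lines. This is not the skill itself: the `DesignNode` shape is a simplified stand-in rather than the Figma API, the `{token.name}` reference syntax is an assumption, and only two of the rules (raw fills and off-token spacing) are shown.

```typescript
// Simplified, hypothetical node shape; a real design file has far more properties.
interface DesignNode {
  name: string;
  fill?: string;             // token reference such as "{color.text.body}", or a raw value
  spacing?: string | number; // token reference such as "{space.4}", or a raw number
  children?: DesignNode[];
}

interface Finding {
  path: string;
  rule: "raw-fill" | "off-token-spacing";
  value: string;
}

// Assumed reference syntax: a token name wrapped in curly braces.
const TOKEN_REF = /^\{[a-z0-9.-]+\}$/i;

function isTokenRef(value: string | number): boolean {
  return typeof value === "string" && TOKEN_REF.test(value);
}

// Walk the tree and report every value that is not bound to a token.
function lintNode(node: DesignNode, parentPath = ""): Finding[] {
  const path = parentPath ? `${parentPath}/${node.name}` : node.name;
  const findings: Finding[] = [];
  if (node.fill !== undefined && !isTokenRef(node.fill)) {
    findings.push({ path, rule: "raw-fill", value: node.fill });
  }
  if (node.spacing !== undefined && !isTokenRef(node.spacing)) {
    findings.push({ path, rule: "off-token-spacing", value: String(node.spacing) });
  }
  for (const child of node.children ?? []) {
    findings.push(...lintNode(child, path));
  }
  return findings;
}

const card: DesignNode = {
  name: "Card",
  fill: "{color.surface.default}",
  spacing: "{space.4}",
  children: [
    { name: "Title", fill: "#1A1A1A" },
    { name: "Body", fill: "{color.text.body}", spacing: 13 },
  ],
};

const cardFindings = lintNode(card);
console.log(cardFindings.map((f) => `${f.path}: ${f.rule}`));
// ["Card/Title: raw-fill", "Card/Body: off-token-spacing"]
```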

A feature flows brief → round-trip → parity → ship, and pilot feedback re-enters the next brief. The round-trip is where the design system breathes — Forge writes Figma from code, Land writes code from Figma, Tether keeps them linked. Parity is the gate before ship.

The skills run inside Claude Code; the plugins run inside Figma. Each one started as a sharp annoyance during real product work — “I keep doing this by hand” — and became a tool I reach for daily.

Forge

Generates Figma frames from the Storybook component inventory. Reads the live inventory over MCP, matches stories against existing library components via @figmaComponent sync tags, and creates new nodes only for the unmatched ones. Tokens, never hex.
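The match-then-create step can be sketched as a simple partition. This is an assumption about the shape of the logic, not the skill's actual implementation: `StoryEntry`, `LibraryComponent`, and `planForge` are hypothetical names, and the inventory is passed in as plain data rather than fetched over MCP.

```typescript
// Hypothetical shapes for an inventory entry and a Figma library component.
interface StoryEntry {
  id: string;
  title: string;
  figmaComponent?: string; // value of the @figmaComponent sync tag, if present
}

interface LibraryComponent {
  key: string;
  name: string;
}

interface ForgePlan {
  reuse: Array<{ story: StoryEntry; component: LibraryComponent }>;
  create: StoryEntry[];
}

// Split the inventory into stories that already have a library component
// and stories that need a new node.
function planForge(inventory: StoryEntry[], library: LibraryComponent[]): ForgePlan {
  const byKey = new Map<string, LibraryComponent>();
  for (const component of library) byKey.set(component.key, component);

  const plan: ForgePlan = { reuse: [], create: [] };
  for (const story of inventory) {
    const component = story.figmaComponent ? byKey.get(story.figmaComponent) : undefined;
    if (component) plan.reuse.push({ story, component });
    else plan.create.push(story); // untagged, or tagged with a key the library no longer has
  }
  return plan;
}

const forgePlan = planForge(
  [
    { id: "button--primary", title: "Button", figmaComponent: "btn-key" },
    { id: "badge--default", title: "Badge", figmaComponent: "stale-key" },
    { id: "tooltip--default", title: "Tooltip" },
  ],
  [{ key: "btn-key", name: "Button" }],
);
console.log(forgePlan.reuse.length, forgePlan.create.length); // 1 2
```

In this sketch a stale tag falls through to `create`; a real tool would probably want to report it separately rather than silently recreate the component.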

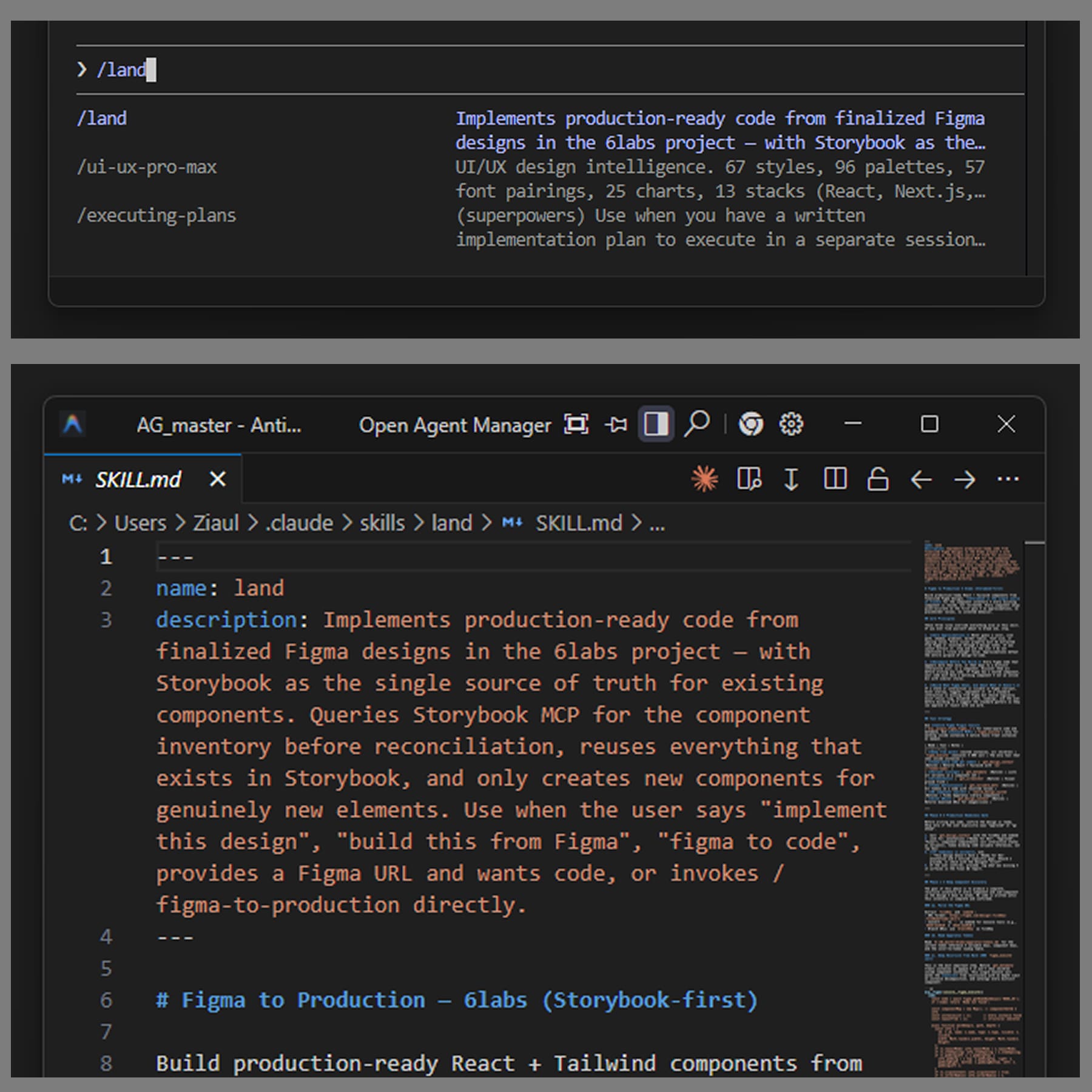

Land

Implements production code from finalised Figma designs. Storybook is the single source of truth: Land queries the inventory before writing a line, reuses everything that already exists, and generates only the genuinely new.

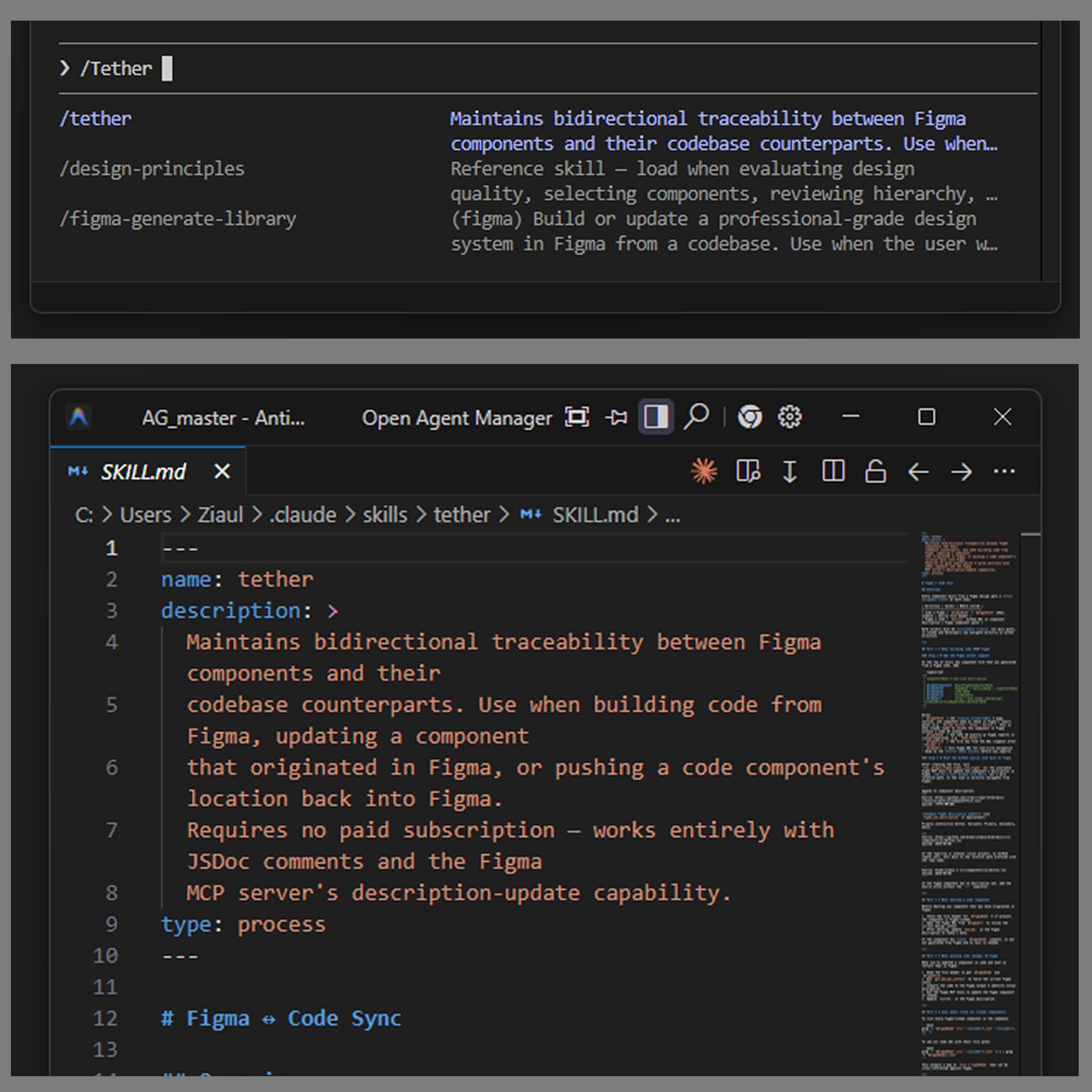

Tether

Keeps every Figma component and its codebase counterpart linked. JSDoc headers on the code side; source-link in the component description on the Figma side. Two halves of one component, one click apart.

Parity

Visual QA: diffs rendered code against the Figma source. Compares actual screenshots, audits state coverage, checks icon parity, writes a report into docs/design-qa/. Token PASS isn't visual PASS.
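To make "Token PASS isn't visual PASS" concrete, here is a minimal sketch of a screenshot comparison. The source does not say how Parity diffs images, so the per-pixel mismatch ratio, the `Screenshot` shape, and the 1% threshold are all assumptions chosen for illustration.

```typescript
// Hypothetical screenshot shape: raw RGBA bytes, 4 per pixel.
interface Screenshot {
  width: number;
  height: number;
  data: Uint8Array;
}

// Fraction of pixels where any channel differs by more than `tolerance`.
function mismatchRatio(a: Screenshot, b: Screenshot, tolerance = 0): number {
  if (a.width !== b.width || a.height !== b.height) {
    throw new Error("screenshots must share dimensions");
  }
  const pixels = a.width * a.height;
  if (pixels === 0) return 0;
  let mismatched = 0;
  for (let p = 0; p < pixels; p++) {
    const offset = p * 4;
    for (let c = 0; c < 4; c++) {
      const delta = Math.abs((a.data[offset + c] ?? 0) - (b.data[offset + c] ?? 0));
      if (delta > tolerance) {
        mismatched++;
        break; // count each pixel at most once
      }
    }
  }
  return mismatched / pixels;
}

// A component passes only if the token audit AND the visual diff both pass.
function parityVerdict(tokenPass: boolean, ratio: number, maxRatio = 0.01): "PASS" | "FAIL" {
  return tokenPass && ratio <= maxRatio ? "PASS" : "FAIL";
}

// Two 2x1 images: the second pixel of the rendered one is slightly off.
const figmaShot: Screenshot = {
  width: 2,
  height: 1,
  data: new Uint8Array([255, 0, 0, 255, 255, 0, 0, 255]),
};
const renderedShot: Screenshot = {
  width: 2,
  height: 1,
  data: new Uint8Array([255, 0, 0, 255, 250, 0, 0, 255]),
};

const shotRatio = mismatchRatio(figmaShot, renderedShot);
console.log(shotRatio); // 0.5
console.log(parityVerdict(true, shotRatio)); // "FAIL", even though tokens passed
```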

Glyph

Flattens icon paths into outlined glyphs, so strokes become fills. The set stays consistent across every screen and surface.

Sweep

Bulk-moves components between pages without breaking instances. Library reorganisations stop being a multi-day chore and become a single click.

Token Binder

Binds remote design-system variables to layer properties at scale. The plugin I wished existed before I built it.

The skills only matter if other people use them. Three workshops, run over Google Meet, with three different audiences.

“Move the artifact toward the user — and let AI carry the work that used to be handoff.”

From the AI-native workflow pitch, run with PMs, devs and QA on the 6labs team.

Live: Forge → Land → Tether → Parity, in under ten minutes. Designers leave with a working Claude Code setup pinned to the repo and the four skills installed.

Pair-build a Figma plugin from a real annoyance. Most participants leave with a first plugin idea — usually the thing they were going to do by hand tomorrow.

The pitch run with PMs, devs and QA: the five workflow patterns, the substrate prerequisites, the adoption roadmap. The round-trip stopped being a design-team thing.

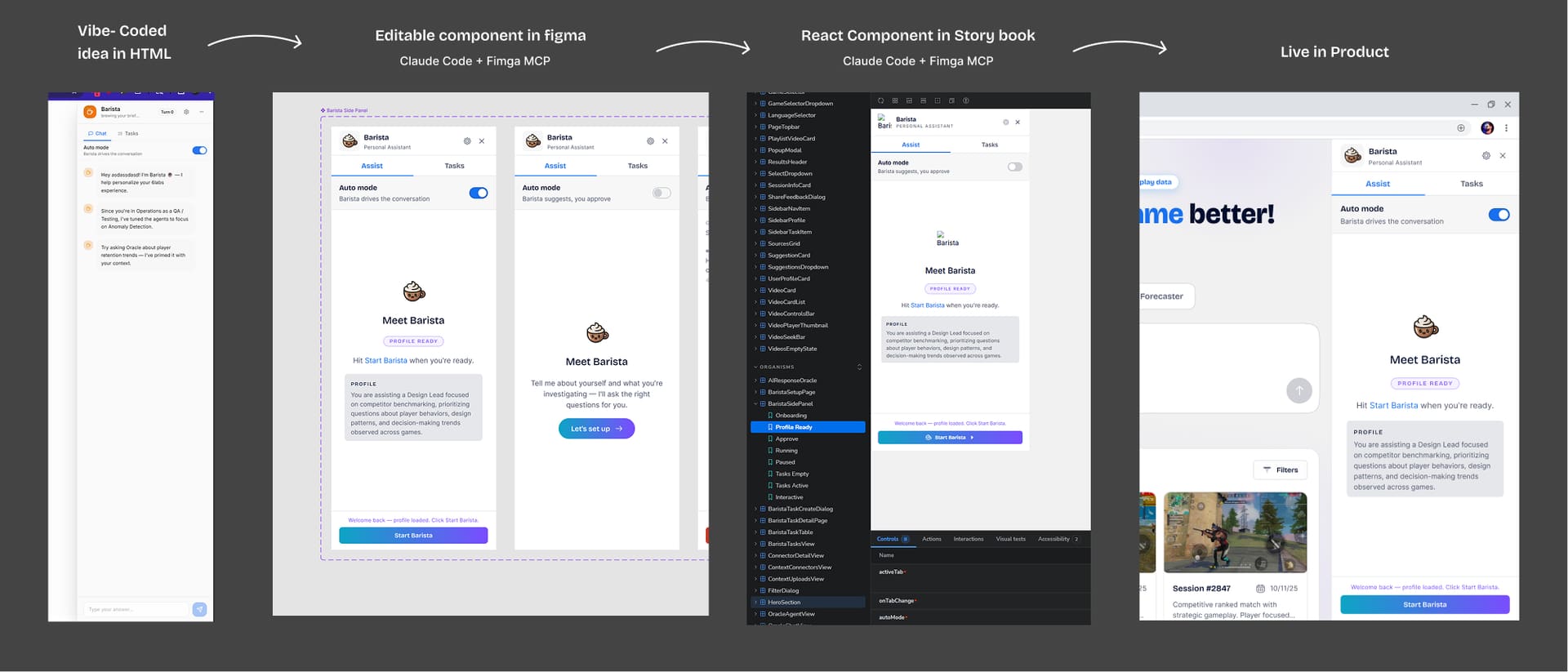

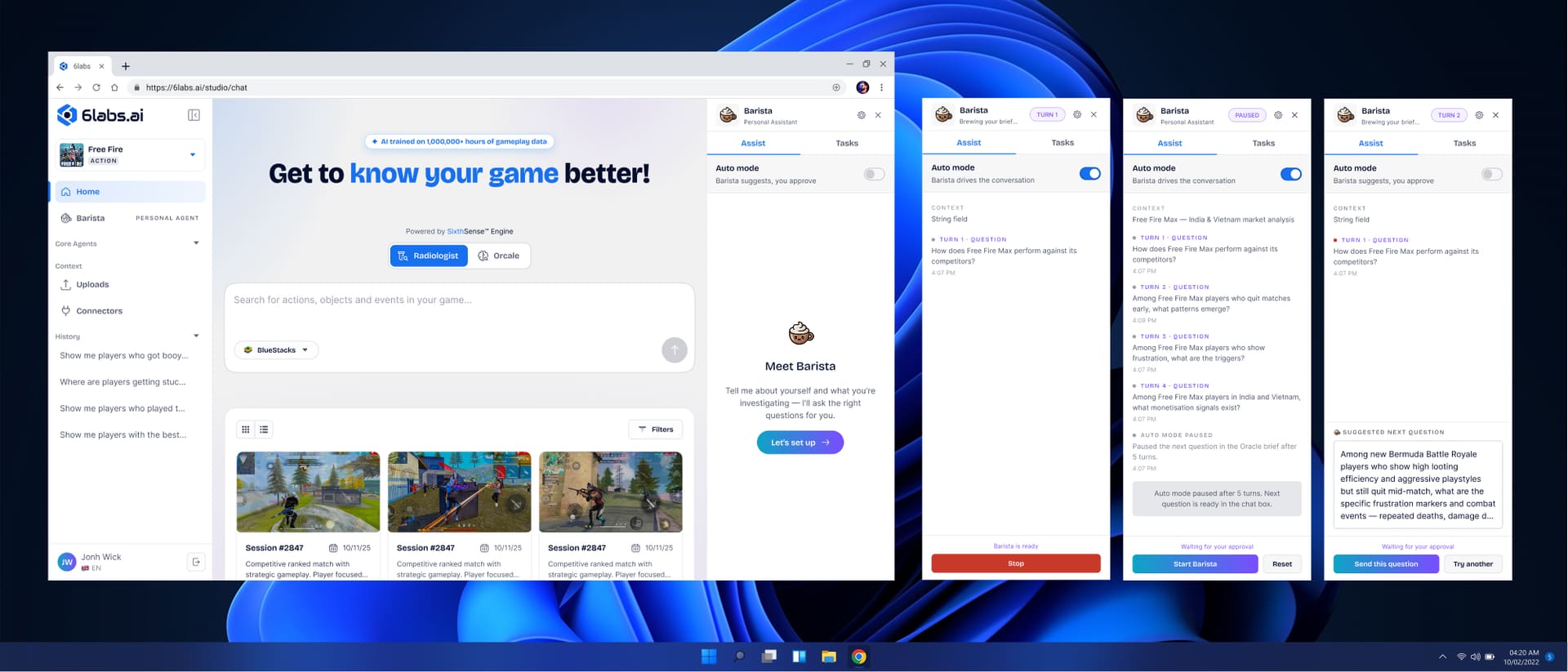

The product 6labs.ai was designed and shipped through this loop. The clearest evidence is one component that travelled the full round-trip — Barista, the assistant that proposes the question. Concept first written as HTML, brought into Figma through Forge, then implemented into the 6labs frontend through Land.

6labs.ai, the 4-agent AI platform companion to this case study, was designed and shipped through the round-trip. Not a theoretical workflow: a real product, live, with paying users in the US, Japan, and Korea. The pipeline produced a product, not slop.

Barista — the assistant that proposes the question — is the first component to travel the full loop end-to-end. HTML concept lifted into Figma via Forge, refined as a DS-bound component, then implemented in the 6labs frontend via Land with 1:1 visual parity. The round-trip isn't a pitch deck — it's a commit history. (See §04 · FIG. 11A / 11B.)

Three workshops (W1–W3) landed the round-trip across functions. Designers, PMs, devs and QA now run the same Figma↔code loop daily, with their own Claude Code setups pinned to the repo. The workflow stopped being mine the moment the team had the keys.

The workshops happened, but later than they should have. Earlier sessions with devs and QA would have surfaced what they actually need from each skill, and let me lock a workflow where every function — design, PM, dev, QA — has a defined role and ownership in the loop. Build with the team, not for them.

Apparatus existed before the AI-readability work. Retrofitting it into a primitive → semantic → component token model cost real time. A proper 3-tier token system from the start would have made the system agent-legible from the first frame, instead of after a year of careful rework.
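For readers unfamiliar with the primitive → semantic → component model, a minimal sketch of how the three tiers chain together. The token names, values, and the curly-brace reference syntax are hypothetical, not the real Apparatus tokens; the point is only that raw values live in the primitive tier and everything above resolves through references.

```typescript
// Each tier maps a token name to a raw value or a "{reference}" to another token.
type TokenTable = Record<string, string>;

// Hypothetical tokens: raw values appear only in the primitive tier.
const primitiveTokens: TokenTable = { "blue.600": "#2563EB", "space.4": "16px" };
const semanticTokens: TokenTable = { "color.action.primary": "{blue.600}" };
const componentTokens: TokenTable = {
  "button.primary.background": "{color.action.primary}",
  "button.padding.x": "{space.4}",
};

// Follow references through the tiers until a raw value is reached.
function resolveToken(
  name: string,
  tables: TokenTable[],
  seen: Set<string> = new Set(),
): string {
  if (seen.has(name)) throw new Error(`circular token reference: ${name}`);
  seen.add(name);
  const value = tables.map((table) => table[name]).find((v) => v !== undefined);
  if (value === undefined) throw new Error(`unknown token: ${name}`);
  const target = /^\{(.+)\}$/.exec(value)?.[1];
  return target !== undefined ? resolveToken(target, tables, seen) : value;
}

const tiers = [componentTokens, semanticTokens, primitiveTokens];
const resolvedBackground = resolveToken("button.primary.background", tiers);
console.log(resolvedBackground); // "#2563EB"
```

An agent working at the component tier never needs to see the hex; it only needs the token name, which is what makes the anchor deterministic.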

I have the conviction that the round-trip is faster, tighter, less defect-prone. I don't have the data — round-trip cycle counts, time-to-implement deltas, QA defect rates pre/post. With those numbers in hand, the company-wide pitch becomes a much louder one.